TBPN has been acquired by OpenAI! The show is staying the same and we’ll continue to go live at 11am Pacific every weekday. This is a full circle moment for me as I’ve worked with @sama for well over a decade. He funded my first company in 2013. Then he helped us fix a serious logjam during a critical funding round a few years later. When I took my second company through YC, he was president, and when I joined Founders Fund, the first deal I saw in motion was the post-ChatGPT round in late 2022. And as we started growing TBPN last year, he was the very first lab lead to join the show. Thank you to everyone who has been a part of TBPN until now. The last year has been the most fun and rewarding part of my career and we’re excited to have more resources than ever going forward.

Everyone is saying “this already exists!” “It’s fine tuning!” “It’s RAG!” “It’s NotebookLM” Wrong. He specifically said he wants to “log in” the data. I’ve never heard anyone pitch a custom LLM that allows you to “log all that in” - the opportunity is still real.

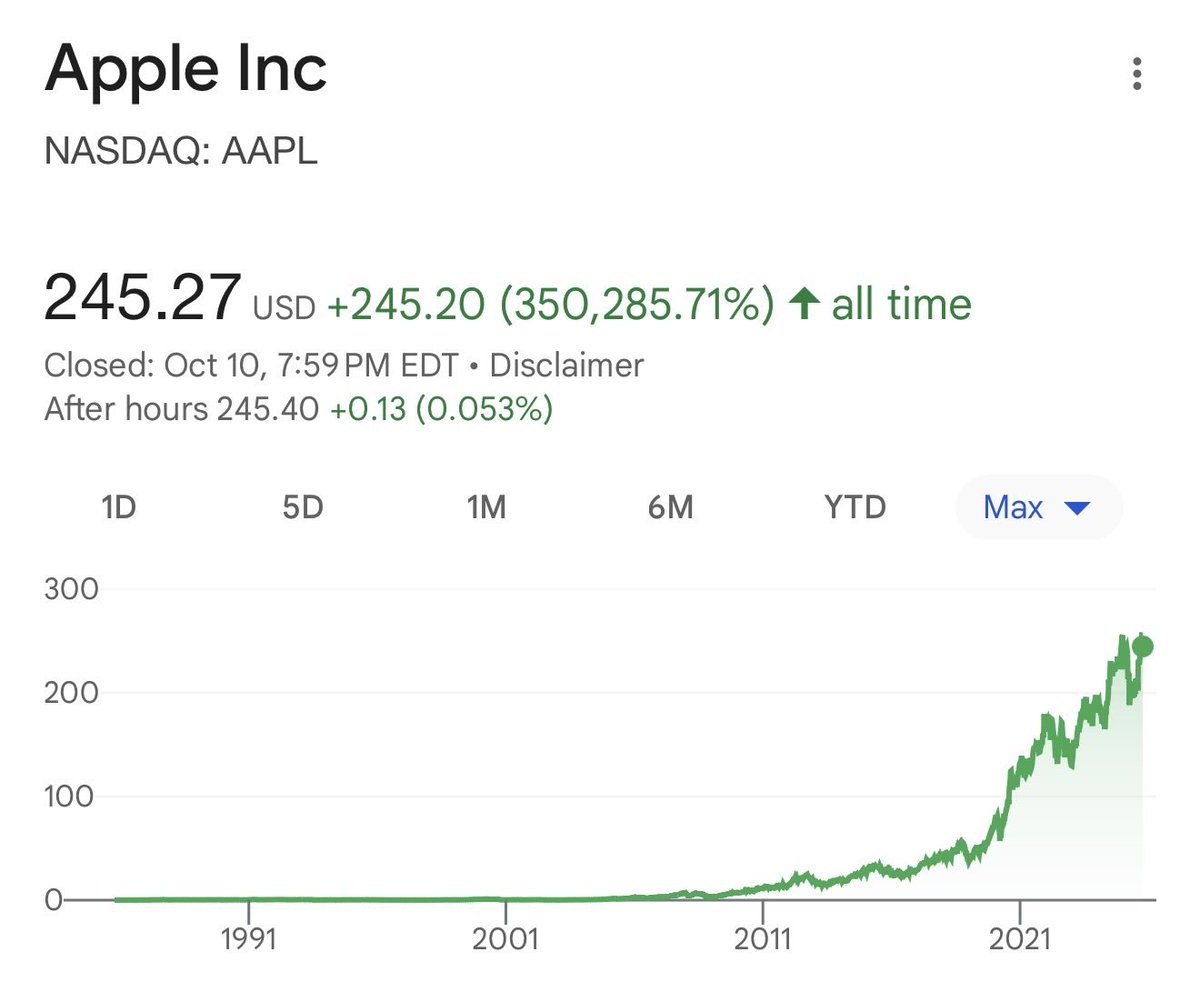

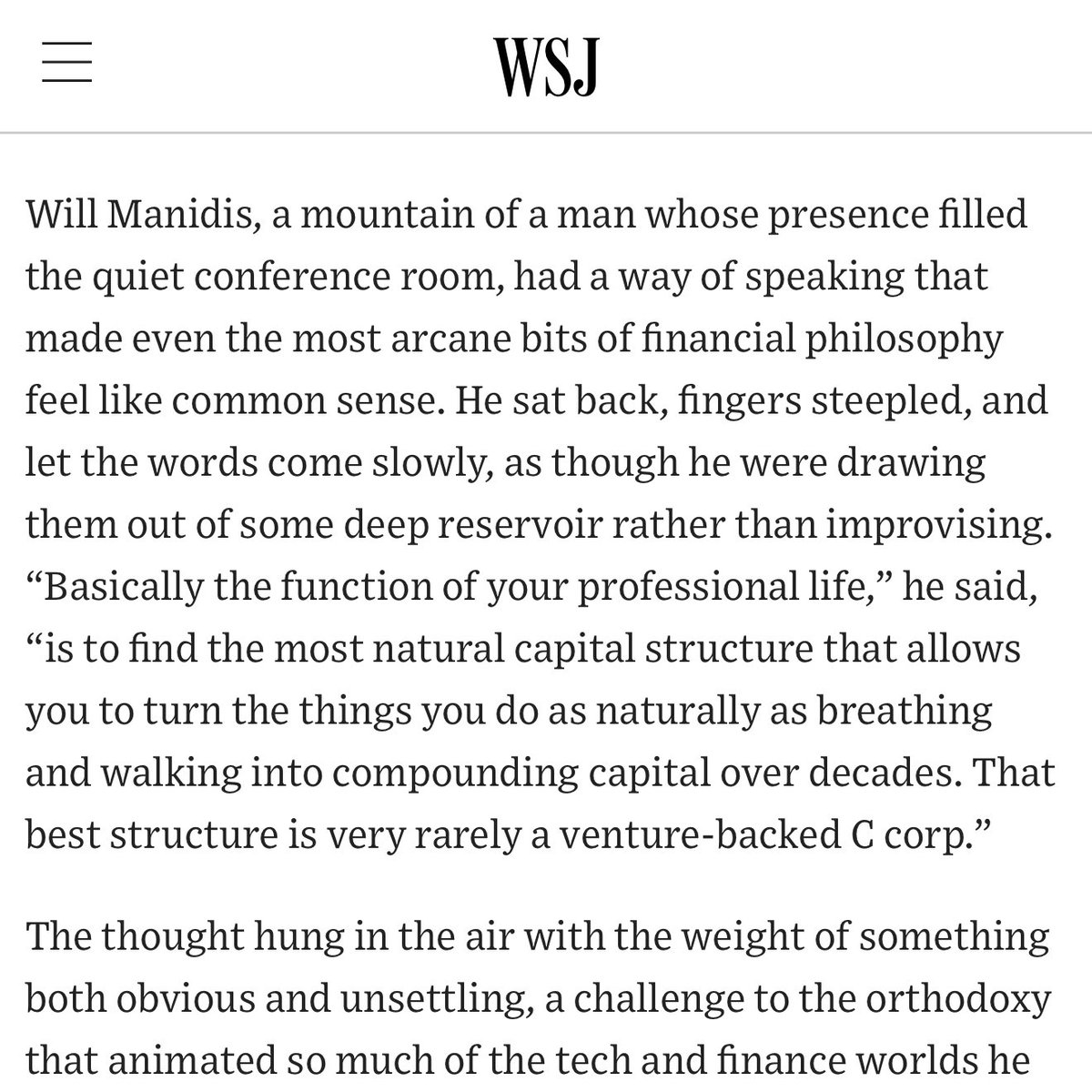

It’s over. Andrej Karpathy popped the AI bubble. It’s time to rotate out of AI stocks and focus on investing in food, water, shelter, and guns. AI is fake, the internet is overhyped, computers are pretty much useless, even the steam engine is mid. We’re going back to sticks and stones. Obviously it’s not actually that bad, but the general tech community is experiencing whiplash right now after the Richard Sutton and Andrej Karpathy appearances on Dwarkesh. Andrej directly called the code produced by today’s frontier models “slop” and estimated that AGI was around 10 years away. Interestingly, this lines up nicely with Sam Altman’s “The Intelligence Age” blog post from September 23, 2024, where he said “It is possible that we will have superintelligence in a few thousand days (!); it may take longer, but I’m confident we’ll get there.” I read this timeline to mean a decade, which is what people always say when they’re predicting big technological shifts (see space travel, quantum computing, and nuclear fusion timelines). This is still earlier than Ray Kurzweil’s 2045 singularity prediction, which has always sounded on the extreme edge of sci-fi forecasting, but now looks bearish. There’s a whole chain of AGI-soon bears who feel vindicated by Andrej’s comments and the general vibe shift. Yann LeCun, Tyler Cowen, and many others on the side of “progress will be incremental” look great at this moment in time. This George Hotz quote from a Lex Fridman interview in June of 2023 now feels way ahead of the curve: “Will GPT-12 be AGI? My answer is no, of course not. Cross-entropy loss is never going to get you there. You probably need reinforcement learning in fancy environments to get something that would be considered AGI-like.” Big tech companies can’t turn on a dime on the basis of the latest Dwarkesh interview, though. Oracle is building something like $300 billion in infrastructure over the next five years. If demand plateaus, there will be real financial consequences. The bull case is of course the other side of the Karpathy take. He didn’t just say “the models are slop,” he also said “the models are amazing” and “autocomplete is my sweet spot.” The shape of adoption around “amazing autocomplete” will determine the success or failure of the massive AI buildout. I like that Oracle is taking a big swing here; it’s exciting to see a nearly 50-year-old company go risk-on, but I don’t like how they are messaging their underwriting. In a CNBC interview, co-CEO Clay Magouyrk said, “Look at [OpenAI's] financials, their growth, and what’s being built with this technology. This isn’t a typical company trajectory. They’ve reached nearly a billion users faster than anyone. Everything about this is unprecedented, but in a good way.” He’s correct that this particular growth curve is unprecedented, but I would have a lot more confidence if the argument were instead framed around previous technology booms and acknowledged the risk-reward tradeoffs. The dot-com bubble was a disaster, but Google and Amazon both made it through and became multi-trillion dollar enterprises. Vanderbilt became the richest man in America during the railroad boom. Bubbles pop, but fortunes are still made by the shrewdest players. It’s just important to make sure you have your feet on solid ground.

t.co/BKrzTZ3Shs

Calling it now, the hottest trend of 2026 will be dogged pursuits. Determined, persistent, and stubborn efforts to achieve things, refusing to give up despite difficulties, opposition, or discouragement, much like a dog relentlessly following a scent. Bookmark this.

Imagine getting from Los Angeles to San Francisco in under 90 minutes without ever stepping on a plane. Think it's impossible? Think again. It's 381 miles. The Bugatti Chiron goes 261 miles per hour. Do the math. The future is here. It's just not evenly distributed.

city tier list that will trigger everyone but is true
S: nyc, la, sf, dc, miami, chicago, austin, boston
A: seattle, nashville, vegas, new orleans, detroit, bakersfield, reno
B:
C:
D: london, paris
F: rome, berlin, tokyo, dubai, hong kong, barcelona, sydney, buenos aires

Yesterday, Anthropic released Claude Opus 4.5 and it delivered strong results across major benchmarks (SOTA on ARC-AGI, SWE-Bench, Computer Use, etc.). The model performed especially well on coding tasks. Gemini 3 had just set high bars in nearly every benchmark, but the one thing it couldn’t really crack was SOTA performance in coding (worse than Sonnet 4.5 on SWE-Bench Verified). It feels like Anthropic is a real lesson in the value of focus: the team hasn’t spread its attention across multiple (frankly quite lucrative) opportunities in productizing a leading AI foundation model (or lab that churns them out). The long-term thesis is still this idea that coding prowess will deliver AGI. The clarity there has created a talent magnet, a focused product in a profitable and new (but enormous) market, and staved off attacks from bigger companies with more funding. They haven’t even launched an AI image generator. Sholto Douglas, a researcher at Anthropic who came on the show yesterday, had a funny quote about it: “We believe there is no shortage of AI images.” Now to be clear, Anthropic does think that image understanding is important. Just as you’d want your human coworker to be able to look at the website they are building and see the results, Claude can look at a screenshot and understand what’s going on. Also notable is that they didn’t vaguepost about the launch. This strategy was basically created by OpenAI to create buzz around new launches and honestly it’s a lot of fun. I still think it’s funny to read into what Sam meant by posting a Death Star picture before launching GPT-5. DeepMind recently picked up the practice of vagueposting and had some fun with it, but Anthropic eschewed the opportunity and ran a pretty by-the-book launch plan: a blog post with some clear benchmarks, a video explaining some of the results and early findings, etc. On AI safety, I’ve shifted my thinking a lot over the past year. I’ve come to appreciate safety research because of its applications related to understanding foreign influence that may occur from open-source models and the effects of long chatbot discussions with people who are under psychological stress. Neither of these scenarios was on my list of potential negative side effects years ago, but now they seem very real and worth taking seriously. Yesterday, Sholto highlighted a tradeoff Anthropic has been wrestling with around biology. No one wants a terrorist to build a bioweapon in their garage, but we all want top cancer researchers to be able to accelerate their workflows with helpful AI assistants. It’s very hard to put concrete timelines together for negative scenarios like “AI helps someone create a bioweapon,” but I think it’s very good to have companies wrestling with these issues early and often. It’s clear that all that moral wrestling is a cultural feature, not a bug. The most widely viewed clip from our interview with Sholto was about Anthropic’s writing-focused culture. Apparently Dario is regularly posting essays on Slack threads explaining his full chain of thought on various issues facing the company. Shawn Wang from Cognition said, “this is the single strongest reason to join ant i have ever read.” Tyler on our team had some extra thoughts on the day, saying “Anthropic still feels very underhyped. Google is just now receiving its flowers in the public markets, and OpenAI has taken up most of the AI mindshare since the original ChatGPT launch. If you are AGI-pilled, Anthropic is probably where you want to work.”

Foreshadowing. OpenAI is the only company that can generate images of the Death Star now.

Traveling is overrated. What are you trying to escape from?

My steel man here is that:
1. Employees who haven’t reached a 1 year vesting cliff don’t have that strong of a claim around “I built this with sweat and need a liquidity event” and we don’t know the tenures of all the left-behind employees.
2. The real culprit here might be FTC antitrust. This is a hyper competitive market and Google STILL feels like they can’t just do a normal acquisition.
3. New facts might come out. Open invite to @_mohansolo to come on @TBPN Monday.

Women control 70% of household spending. But they only do 12% of the sports betting. Who’s building sports betting for women?

John Coogan (@johncoogan) X Stats & Analytics

John Coogan (@johncoogan) has 74.2K X followers with a 0.61% engagement rate over the past 12 months. Across 756 posts, John Coogan received 233K total likes and 39.9M impressions, averaging 308 likes per post. This page tracks John Coogan's performance metrics, top content, and engagement trends — updated daily.
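
As a rough check on how the summary figures relate to each other, the arithmetic can be sketched in a few lines of Python. The inputs are the rounded values quoted above ("233K", "39.9M"), so the results are approximate. The likes-per-impression ratio is an assumed proxy for comparison only: the page does not state how its engagement rate is computed, and it may count replies, reposts, or other interactions.

```python
# Figures as reported on the page; "233K" and "39.9M" are rounded,
# so everything derived from them is approximate.
posts = 756
total_likes = 233_000
impressions = 39_900_000

# Average likes per post (the page reports 308).
avg_likes_per_post = total_likes / posts

# Likes as a percentage of impressions. This is an assumed proxy,
# NOT the page's engagement-rate formula, which is not stated.
likes_per_impression_pct = 100 * total_likes / impressions

print(f"avg likes per post: {avg_likes_per_post:.1f}")
print(f"likes / impressions: {likes_per_impression_pct:.2f}%")
```

The first value rounds to the reported 308 likes per post. The second comes out a little below the reported 0.61%, which would be consistent with the engagement rate counting more than likes, though that is a guess.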